Shape/texture bias in brains and machines

With: Zejin Lu, Radoslaw Cichy, Tim Kietzmann

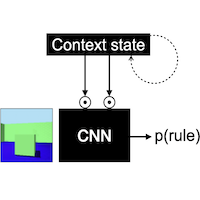

Summary: Building on Geirhos et al. 2018, which showed that CNNs are more texture-biased than humans, we redefine the shape bias metric and assess the influence of recurrence and development on shape bias in RNNs, humans, and macaques.

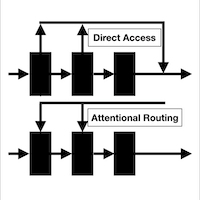

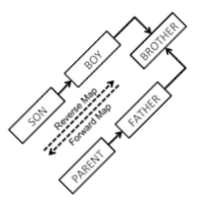

Relational representations via glimpse prediction

With: Linda Ventura, Tim Kietzmann, et al.

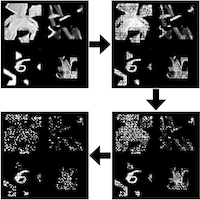

Summary: Inspired by Summerfield et al. 2020, research on RF remapping, and predictive vision, we evaluate the usefulness of predicting the content of the next glimpse for generating scene representations that carry relational information about the scene's constituents.

Representational drift in macaque visual cortex

With: Daniel Anthes, Peter König, Tim Kietzmann

Summary: Employing tools developed during our investigations into continual learning, we study whether representational drift occurs in macaque visual cortex and how that multi-area system deals with changing representations.

Brain reading with a Transformer

With: Victoria Bosch, Tim Kietzmann, et al.

Summary: Using fMRI responses to natural scenes to condition the sentence generation in a Transformer, we study the neural underpinnings of scene semantics (objects and their relationships) encoded in natural language.

Comments: Preliminary results were presented at CCN'24

Perception of rare inverted letters among upright ones

With: Jochem Koopmans, Genevieve Quek, Marius Peelen

Summary: In a Sperling-like task where the letters are mostly upright, there is a general tendency to report occasionally-present and absent inverted letters as upright to the same extent. Previously reported expectation-driven illusions might be post-perceptual.

Comments: Jochem's master's thesis. Paper in prep.

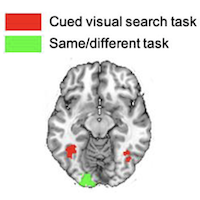

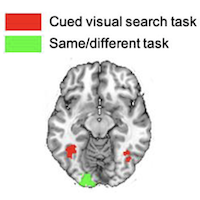

Task-dependent characteristics of neural multi-object processing

With: Lu-Chun Yeh, Marius Peelen

Summary: The association between the neural processing of multi-object displays and the representations of those objects presented in isolation is task-dependent: same/different judgement relates to earlier, and object search to later stages in MEG/fMRI signals.

Publication: JNeurosci'24 paper

Comments: JNeurosci paper in brief

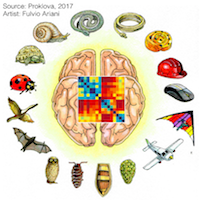

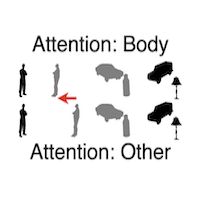

The nature of the animacy organization in human ventral temporal cortex

With: Daria Proklova, Daniel Kaiser, Marius Peelen

Summary: The animacy organisation in the ventral temporal cortex is not fully driven by visual feature differences (modelled with a CNN). It also depends on non-visual (inferred) factors such as agency (quantified through a behavioural task).

Publications: eLife'19 paper, Master's thesis

Comments: Master's thesis in brief, eLife paper in brief

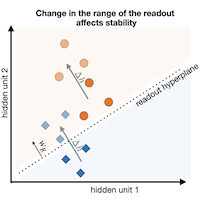

With: Rowan Sommers, Daniel Anthes, Tim Kietzmann

With: Genevieve Quek, Marius Peelen

With: Johannes Singer, Radoslaw Cichy, Tim Kietzmann

With: Lotta Piefke, Adrien Doerig, Tim Kietzmann

With: Surya Gayet, Marius Peelen, et al.

With: Marius Peelen

With: Giacomo Aldegheri, Marcel van Gerven, Marius Peelen

With: Ilze Thoonen, Sjoerd Meijer, Marius Peelen

With: Adrien Doerig, Tim Kietzmann

With: Giacomo Aldegheri, Tim Kietzmann

With: Varad Choudhari

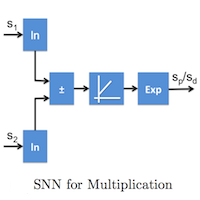

With: Sukanya Patil, Bipin Rajendran